PROCEEDINGS ARE NOW AVAILABLE ON CEUR: HAI-GEN+user2agent 2020

This workshop will be held virtually via WebEx on March 17, starting at 9 AM Eastern Daylight Time (EDT). Use the Time Zone Converter if in doubt. We changed to daylight saving time last weekend, so all times are EDT, not EST.

Agenda

9:00 - 9:25 Opening & Introductions

9:25 - 10:10 Keynote 1 by Douglas Eck: Challenges in Building ML Algorithms for the Creative Community

Session Chair: Werner Geyer

10:10 - 10:50 Session 1 - Generative Music

Session Chair: Lydia Chilton

[20 mins] Cococo: AI-Steering Tools for Music Novices Co-Creating with Generative Models Ryan Louie, Andy Coenen, Cheng-Zhi Anna Huang, Michael Terry and Carrie Cai

[20 mins] Latent Chords: Generative Piano Chord Synthesis with Variational Autoencoders Agustin Macaya, Manuel Cartagena, Rodrigo Cadiz and Denis Parra

10:50 - 11:20 Coffee Break

11:20 - 12:30 Session 2 - Generative Text, Images, and Drawing

Session Chair: Adam Tauman Kalai

Paper & Demo

[20 mins] How Novelists Use Generative Language Models: An Exploratory User Study Alex Calderwood, Katy Ilonka Gero and Lydia B. Chilton

[10 mins] Demo: Literary Style Transfer with Content Preservation Katy Gero, Chris Kedzie and Lydia B. Chilton

Invited Guest Speakers

[20 mins] Draw with Me: Human-in-the-Loop for Image Restoration Thomas Weber, Zhiwei Han, Stefan Matthes, Yuanting Liu and Heinrich Hussmann

[20 mins] Creative Sketching Partner: An Analysis of Human-AI Co-Creativity Pegah Karimi, Jeba Rezwana, Safat Siddiqui, Mary Lou Maher, Nasrin Dehbozorgi

12:30 - 14:00 Lunch

14:00 - 14:45 Keynote 2 by Hendrik Strobelt: Visual Human-AI collaboration tools

Session Chair: Ranjitha Kumar

14:45 - 15:05 Session 3 - The Dark Side of Generative Approaches

Session Chair: Ranjitha Kumar

[20 mins] Business (mis)Use Cases of Generative AI Stephanie Houde, Vera Liao, Jacquelyn Martino, Michael Muller, David Piorkowski, John Richards, Justin Weisz and Yunfeng Zhang

15:05 - 15:30 Closing / Wrap-Up

Keynote 1

Challenges in Building ML Algorithms for the Creative Community

Douglas Eck, Google AI, Magenta Team

Abstract

Magenta is an open-source project exploring the role of machine learning as a tool in the creative process. We’ve been running in public (g.co/magenta) for almost four years. This talk will look back at successes and frustrations in bringing our work to creators, mostly musicians. I’ll also talk about some current and future work. Magenta is made up of several ML researchers and engineers on the Google Brain team, which focuses on deep learning. Our successes have mostly been in the area of new algorithm development (NSynth, MusicVAE, Music Transformer, DDSP and others). Our frustrations have been in finding ways to make these models useful for music creators. The talk will be a casual, example-driven discussion about what worked and what didn’t, and where we’re going next. Spoiler: we have been humbled by the user interface challenges encountered when building tools for creative work. However, we think we’ve turned the corner on this, in no small part thanks to more engagements with HCI experts like those who attend IUI.

Bio

Douglas Eck is a Principal Scientist at Google Research, currently working in the Paris office. He leads the Magenta Project, a Google Brain effort to create music, video, images and text using deep learning and reinforcement learning. He is also exploring related research in generative models for domains like open-ended dialog and video generation. Before focusing on generative models for media, Doug worked in areas such as music perception, aspects of music performance, machine learning for large audio datasets and music recommendation. He completed his PhD in Computer Science and Cognitive Science at Indiana University in 2000 and went on to a postdoctoral fellowship with Juergen Schmidhuber at IDSIA in Lugano, Switzerland. Before joining Google in 2010, Doug was on the Computer Science faculty at the University of Montreal (MILA machine learning lab), where he became Associate Professor.

Keynote 2

Visual Human-AI collaboration tools

Hendrik Strobelt, IBM Research AI, Visual AI Lab

Abstract

While there is a fear of loss of human agency for the future of AI, we want to provide tools and frameworks to maximize the synergies of human capabilities and AI capabilities. In this talk, I will show examples of human-AI collaboration tools for generating content (using a GAN) and analyzing content (using an NLP model). Finally, I will present a framework, called collaborative semantic inference, that can be a guide for future human-AI systems.

Bio

Hendrik joined IBM Research in 2017 after postdocs at Harvard SEAS and New York University. He holds a Ph.D. from the University of Konstanz and an MSc (Diplom) from the University of Technology in Dresden. He has received multiple awards for publications in the field of information visualization and received the Lohrmann medal (highest student honor) from TU Dresden. His research focuses on visualization and visual analytics for AI, NLP, and biomedical data and, more recently, on human-AI collaboration approaches. He is a visiting researcher at MIT.

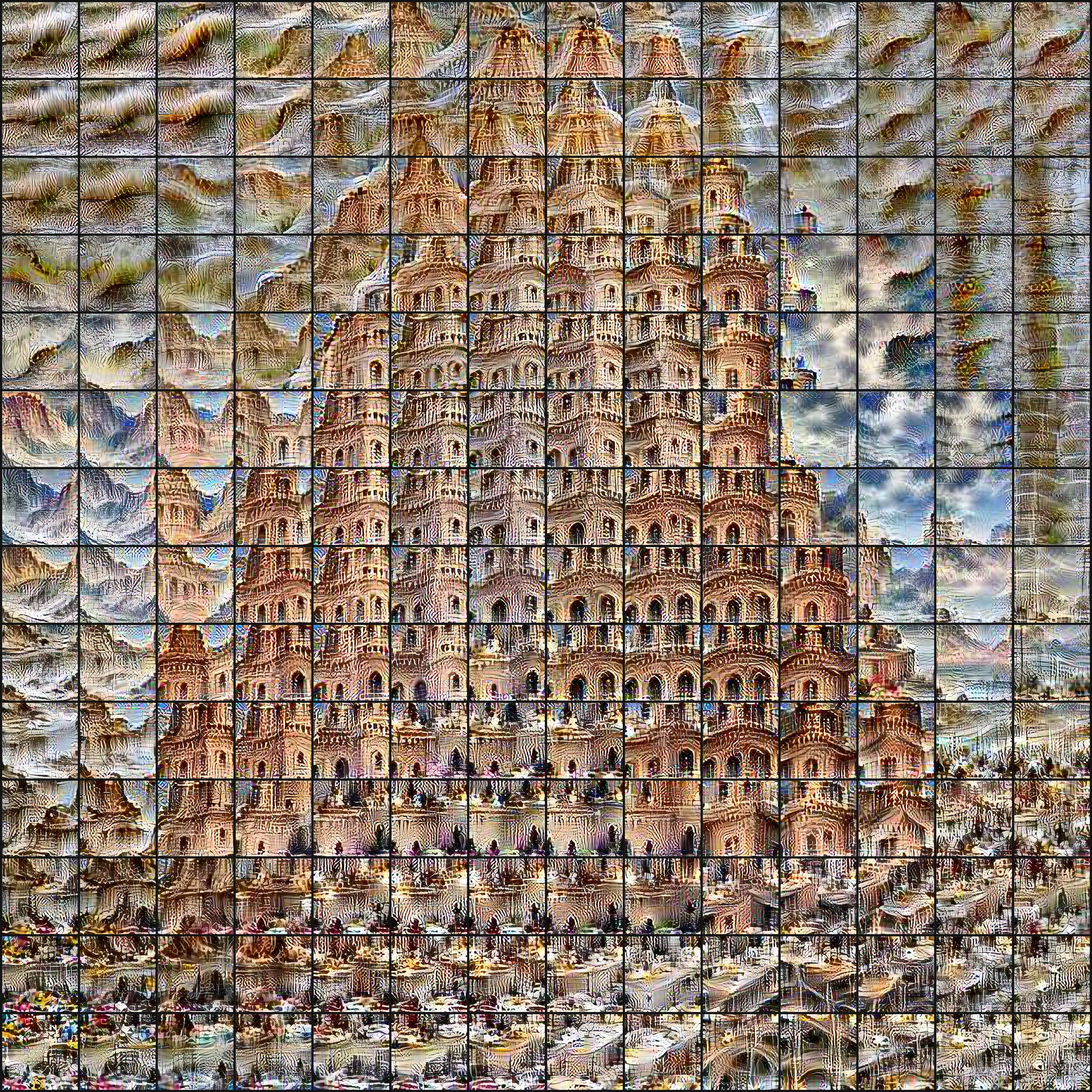

The Tower of Babel. Generative Art by Mauro Martino.

Workshop Description

Recent advances in generative modeling through deep learning approaches such as generative adversarial networks (GANs), variational autoencoders (VAEs), and sequence-to-sequence models will enable new kinds of user experiences around content creation, giving us “creative superpowers” and moving us toward co-creation and curation. While the areas of computational design, generative design, and computational art have existed for some time, content with unprecedented fidelity is now being produced due to breakthroughs in generative modeling using deep learning. Ian Goodfellow’s work on face generation and StyleGAN, OpenAI’s GPT-2, and recent deepfake videos of Mark Zuckerberg and Bill Gates are prominent examples of AI-generated content that is almost indistinguishable from human-generated content. These examples also highlight some of the significant societal, ethical and organizational challenges that generative AI poses, including security, privacy, ownership, quality metrics and evaluation of generated content.

The goal of this half-day workshop is to bring together researchers and practitioners from both HCI and AI to explore and better understand the opportunities and challenges of generative modeling from an HCI perspective. We envision that the user experience of creating both physical and digital artifacts will become a partnership between humans and AI: humans will take on specification, goal setting, steering, high-level creativity, curation, and governance, while AI will augment human abilities through inspiration, low-level creativity and detail work, and the ability to test ideas at scale.

Submissions are encouraged on, but not limited to, the following topics:

- Novel user experiences supporting the creation of both physical and digital artifacts in an AI-augmented fashion

- Business use cases of generative models

- Novel applications of generative models

- Techniques, methodologies & algorithms that enable new user experiences and interactions with generative models and allow for directed and purposeful manipulation of the model output

- Governance, privacy, content ownership

- Security, including forensic tools and approaches for deepfake detection

- Evaluation of generative approaches and quality metrics

- User studies

- Lessons learned from computational art and design and from generative design, and how these impact research

Cathedral in Cagliari, Sardinia, Co-Creation Experience with GANPaint Studio by Hendrik Strobelt et al.

Submission Guidelines

We encourage submissions of full and short papers following the IUI Paper Guidelines, as well as demos of generative deep learning systems that highlight co-creation user experiences or other topics listed above. Demo submissions also follow the IUI Demo Guidelines.

All papers will undergo single-blind peer review (i.e., author names and affiliations should be listed). If a submission is accepted, at least one of the authors must attend the workshop to present the work.

A workshop summary will be included in the ACM Digital Library for IUI 2020. While papers and demos are not part of the archival ACM IUI proceedings, we will publish them online at CEUR Workshop Proceedings.

Please submit your papers & demos to EasyChair: https://easychair.org/my/conference?conf=haigen2020#

Important Dates

December 20, 2019: Paper & Demo Submissions Due

January 14, 2020: Author Notification

February 18, 2020: Camera-Ready Version of Papers and Demos Due

March 17, 2020: Workshop Day

Organizing Committee

- Werner Geyer, IBM Research AI, Cambridge, MA

- Lydia Chilton, Columbia University

- Ranjitha Kumar, University of Illinois at Urbana-Champaign

- Adam Tauman Kalai, Microsoft Research, Cambridge, MA

Contact: hai-gen2020@service.microsoft.com

Program Committee

- Nancy Baym, Microsoft Research

- Zoya Bylinskii, Adobe Research

- Carrie Cai, Google

- Elizabeth Clark, University of Washington

- Sebastian Gehrmann, Harvard School of Engineering

- Katy Gero, Columbia University

- Per Ola Kristensson, University of Cambridge

- Jacquelyn Martino, IBM Research AI

- Mauro Martino, IBM Research AI

- Michael Mateas, University of California, Santa Cruz

- Antti Oulasvirta, Aalto University

- Dafna Shahaf, Hebrew University of Jerusalem

- Akash Srivastava, IBM Research AI

- Hendrik Strobelt, IBM Research AI

- Michael Terry, Google

- Steven Wu, University of Minnesota

- Haiyi Zhu, Carnegie Mellon University